Signal Debt: what would it mean to let good signal in?

Ward Cunningham introduced the technical debt metaphor in 1992 to describe the cost of choosing a simpler solution today that you’ll need to revisit tomorrow. It was a precise metaphor for a specific problem in software development. Then it escaped the engineering department and went mainstream - usually as a complaint. The system is slow, the feature is late, the codebase is a mess. Someone says “technical debt” and everyone nods as if that explains it.

Jabe Bloom’s framing of technical debt is one of the most clarifying. Technical debt isn’t a failure. It’s the natural cost of building under uncertainty. You make the best decision available with what you know, you ship, you learn, and you carry the cost forward. The debt isn’t the problem. Losing track of it is.

But every build is downstream of something. A decision. And every decision is downstream of something else - the quality of what your organisation thinks it knows about its customer.

So there is an equivalent debt. I’m just going to call it signal debt.

It doesn’t live in the codebase. It lives upstream of it - in the research, the synthesis, the insight decks, the quarterly themes. Everything that crossed from the outside world into your organisation before it ever reached a decision. Unlike technical debt, nobody decided to take it on as a debt. You don’t get anything for it. When a customer truth degrades in transit you don’t move faster or ship sooner. You just lose it. Nobody is accounting for it - nothing in the operating model is designed to.

Most organisations consult the customer at the start. Then build whatever they were going to build anyway. Not out of malice. Not out of laziness. The research happens. The interviews get done. The insight-driven strategy deck lands in inboxes across the organisation. And then gradually, locally, rationally, something else takes over. The transformation programme has momentum. The product roadmap has commitments. The data platform has a delivery date. The customer enters the process as a presence and exits as a percentage. By the time the decision gets made, the signal that was supposed to inform it has crossed so many boundaries - from researcher to analyst, from analyst to deck, from deck to meeting, from meeting to ticket - that what arrives is the organisation talking to itself about what-someone-outside-once-said. It has forgotten what it has forgotten.

This has been happening for years. And AI is about to make it very expensive. Which might - finally - be the thing that forces a reckoning.

Every handoff or crossing destroys a little of the common ground (Klein via Bloom) - the shared understanding that lets people appear rational to each other even when nobody holds the full picture. Nobody is doing anything wrong. But by the time a signal reaches a decision, what-the-customer-expressed is inaudible. Signal debt is what accumulates in the gap. And unlike technical debt, it can’t be refactored. When code is refactored, the logic comes with it. When a customer signal gets flattened, the thing that made it true is gone. There’s no undo function in the enterprise.

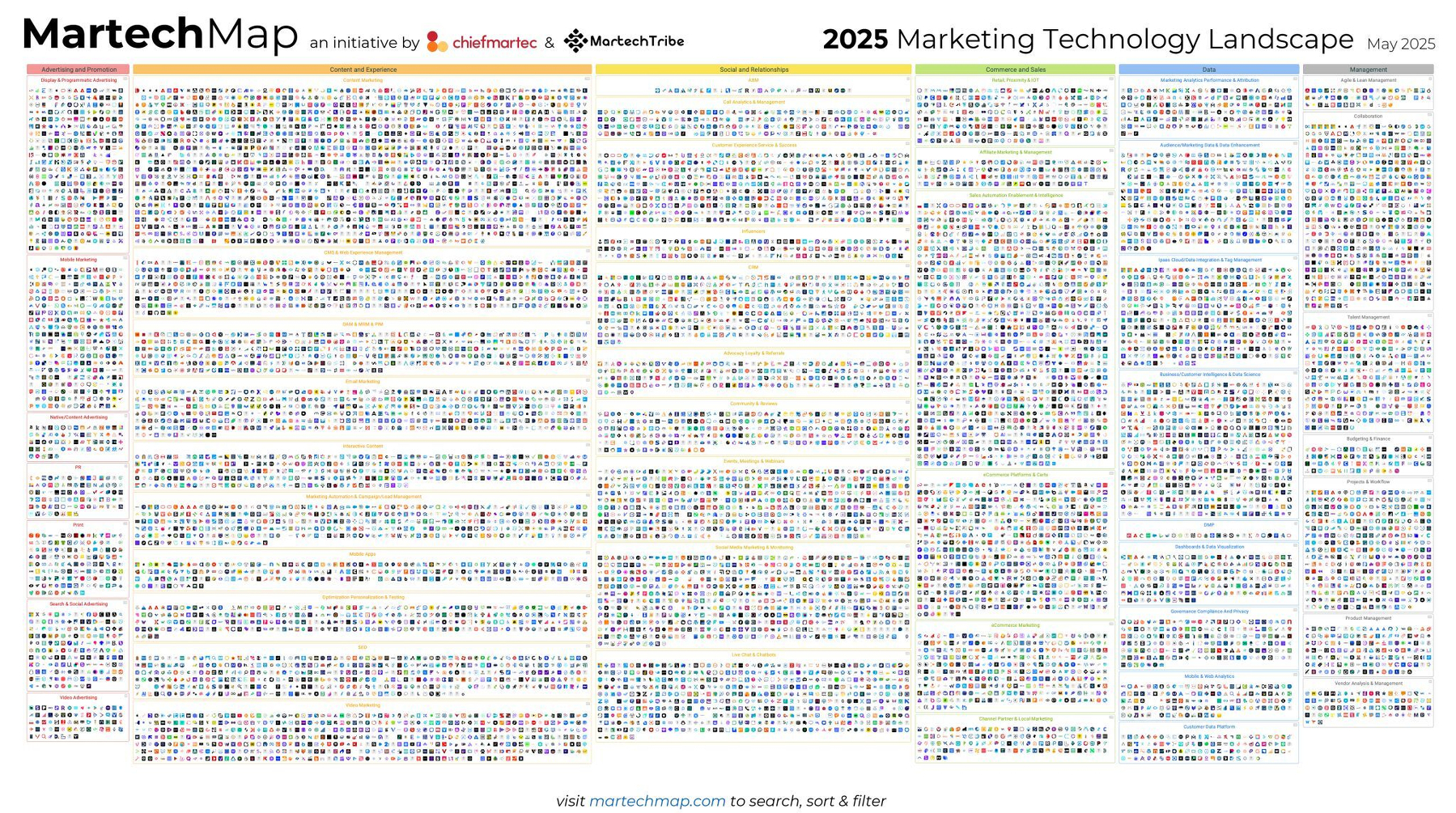

The customer estate - those teams organised around customer knowledge - is built to activate signal, not carry it. Look at the 2025 martech landscape for crying out loud. 15,000 products. 100x growth in fifteen years. Built for activation, not fidelity.

And because teams organise around activation they make locally rational decisions - what to capture, what to summarise, what to pass forward. Each step makes sense. None of them owns the crossing. (This is boundary objects again, but that’s for another post.) The underlying architecture makes signal debt almost inevitable - systems that don’t speak to each other, data that doesn’t travel with its context, handoffs with no mechanism for checking what-arrives against what-was-sent.

And on top of all of that is something more dangerous still. “Customer”, the word, is doing too many jobs at once. It’s everything everywhere all at once - the strategy decks, the data platforms, the service models, the call centres, the slideware.

And because it’s everywhere, nobody questions it. It becomes a flashing neon sign rather than a person or persons that once-upon-a-time had a story to tell. Calling something ‘customer-this’ or ‘customer-that’ justifies internal momentum rather than being tightly coupled to something real arriving from the outside. It’s a multi-stakeholder activity where the customer estate plays exquisite corpse. Each function draws their section, folds the paper, passes it on. Nobody sees the whole. The risk isn’t that we disagree about the customer. It’s that we stop knowing we do.

[Ted Joans, Exquisite Corpse (1976–2008). 132 contributors across 32 years. MoMA]

And the customer themselves? The personhood that sees and fears and feels your product, service and experience - distant. Structurally remote. Getting to them - the fieldwork, the research, the synthesis - takes weeks, months. Everyone knows this. It’s just what the work involves. So knowledge gets batched. Commissioned, completed, distributed. Each snapshot becomes a million-parts-diluted version of ‘the customer’ that the organisation then acts on until the next one. And everything that follows - the commercial modelling, the transformation programme, the product operating model, the data platform - flows from it. If the signal was thin when it was captured, or thinner still by the time it reached the decision, none of that downstream work changes anything fundamental. Nobody noticed that the input got degraded.

Leaders feel this without being able to name it. Something is slow that should be fast. Something is uncertain that should be knowable. The organisation is busy and the customer is still somehow not quite present in the room.

That’s signal debt at scale.

And now, ladies and gents, we have AI.

So what-the-organisation-thinks-it-knows-about-its-customer isn’t what-the-customer-expressed, but, yep, that’s what AI is running on. AI is being bolted onto the customer estate. Onto the CRM. Onto the insight function. Onto the content pipeline. Onto the decisioning layer. And because the output is fluent - structured, confident, plausible - it feels like progress. Unfortunately AI doesn’t repair signal debt. It makes it worse. Whatever model or fragment of ‘the customer’ it runs on, it then runs for you, at speed and at scale. If that model is thin or batch-processed or is the product of that exquisite corpse assembled across disconnected functions - then the output will be wrong in ways that are very hard to see but extremely easy to distribute.

The problem, in this situation at least, isn’t AI - it’s what AI is fed.

A genuinely high-fidelity customer truth - qualitative, longitudinal, persisting across the crossings rather than degraded by them - would change what’s possible entirely. That’s not where most organisations are. And while signal debt goes unacknowledged, the AI initiative is just the fastest way yet to scale a polished, high-confidence mistake.

The architecture for signal fidelity exists. It has been proven. In operational environments - supply chains, logistics, fulfilment - observability is engineered into the stack. There the signal stays live and checkable at each crossing, not just at the end. Nothing arrives as an autopsy. When a store needs milk, the signal is clear and the supply response matches it. Nobody is arguing about whether the data can be trusted. That rigour isn’t happening at the customer boundary. Upstream, where the customer signal should be at its most vital, the transductive architecture is absent. Same organisation. Different estate. The crossings are unowned, the handoffs unchecked, the debt accumulating silently.
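To make "checkable at each crossing" a little more concrete, here is a minimal sketch in Python. It is an illustration only, not a description of any real system: the names `Signal`, `capture`, `cross` and `fingerprint` are hypothetical, and the boundary labels are borrowed from the handoffs described earlier. The idea is simply that the customer's own words travel with every paraphrase, and each crossing checks what-arrives against what-was-sent before passing it on.

```python
from dataclasses import dataclass
from hashlib import sha256


def fingerprint(text: str) -> str:
    """Stable digest of the customer's own words (hypothetical helper)."""
    return sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Signal:
    verbatim: str                 # what the customer actually said
    summary: str                  # the current paraphrase of it
    sent_digest: str              # fingerprint taken at the moment of capture
    trail: tuple[str, ...] = ()   # boundaries crossed so far


def capture(verbatim: str) -> Signal:
    """Capture a signal; at this point the summary is the verbatim itself."""
    return Signal(verbatim, verbatim, fingerprint(verbatim))


def cross(signal: Signal, boundary: str, new_summary: str) -> Signal:
    """Hand the signal across a boundary, refusing if the source was altered."""
    if fingerprint(signal.verbatim) != signal.sent_digest:
        raise ValueError(f"source altered before crossing: {boundary}")
    return Signal(
        signal.verbatim,
        new_summary,
        signal.sent_digest,
        signal.trail + (boundary,),
    )


# Usage: the paraphrase changes at each crossing, the verbatim does not.
s = capture("I gave up because the form kept logging me out.")
s = cross(s, "researcher->analyst", "Session timeouts cause drop-off")
s = cross(s, "analyst->deck", "Drop-off at checkout")
print(s.trail)
print(s.verbatim)
```

A digest can only say whether the original words survived the journey. It cannot judge whether the latest summary is faithful to them. That part still needs a person reading the verbatim next to the paraphrase, which is rather the point.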

It isn’t that the problem is unsolvable. It’s that it isn’t a process failure with a root cause. It’s a condition - distributed across every crossing, diffuse by design. That’s why the answer isn’t better monitoring. It’s better observability.

Signal debt won’t appear on a balance sheet. It won’t show up in a sprint retrospective or a quarterly business review. It accumulates quietly - and divergently. The same compromised signal fans out across the organisation simultaneously: into the commercial model, the product roadmap, the data platform, the content pipeline, the customer insight function. Each team working from their version of it. Each one now with an AI assistant, running on that same thin input, at speed, at scale, confident in what arrived, unaware of what was lost. Not a single-point-of-failure-compounding-in-one-direction but a structural liability replicating across every surface.

What’s needed isn’t a new methodology - it’s a different relationship to the signal. Keeping faith with what was meant, not what survived the crossing.

Next time: what it would mean to carry a signal you could actually trust.
